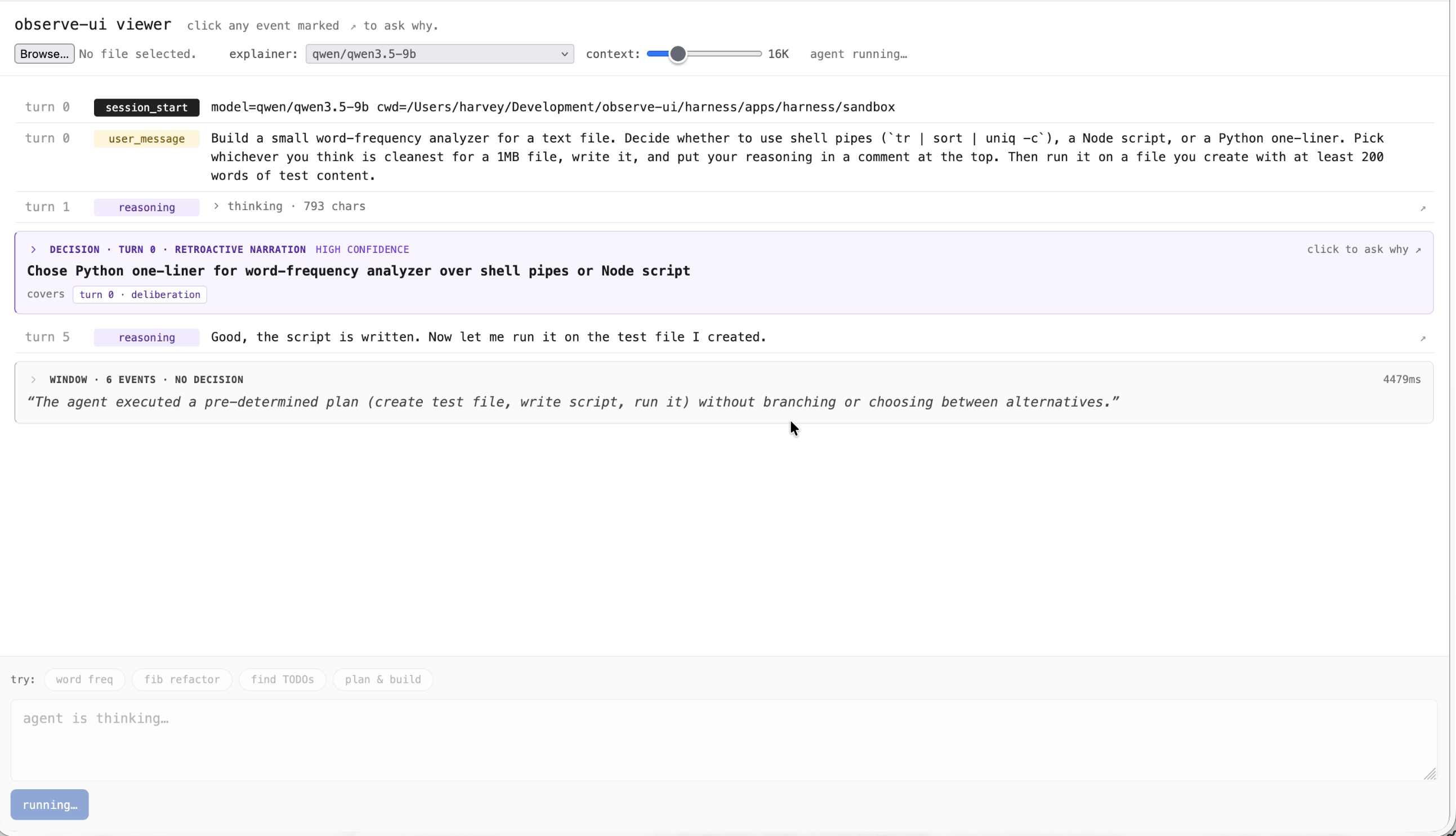

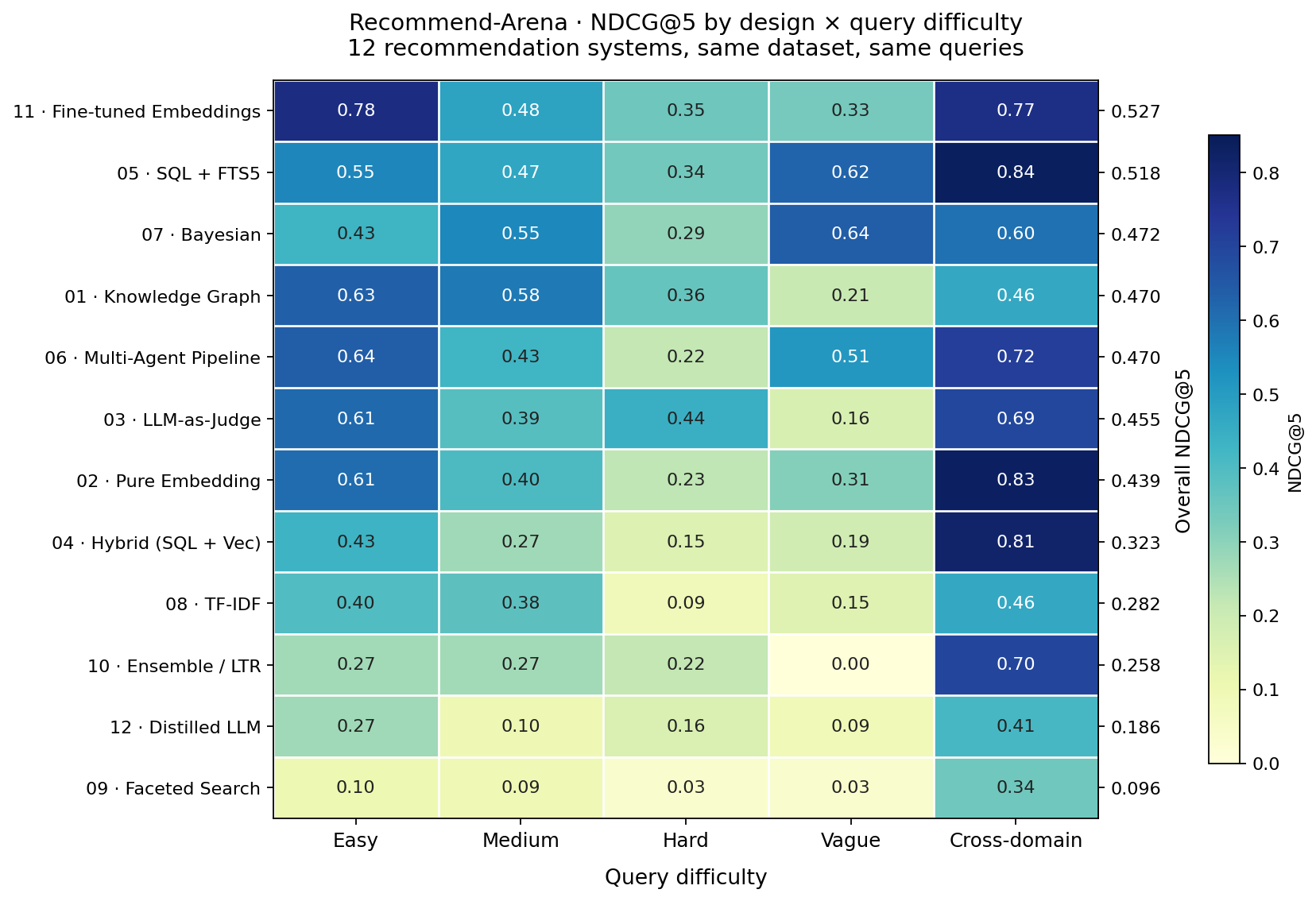

observe-ui

Decision-legibility surface for autonomous coding agents (live cockpit + retrospective blame).

/ prototypes + experiments

Solo projects I've been building with AI tools — some made in a single sitting, others over a week or two. Mostly Claude Code. I try stuff out and keep iterating on what sticks — little tools I actually use, prototypes still in motion, patterns I'm figuring out as I go.

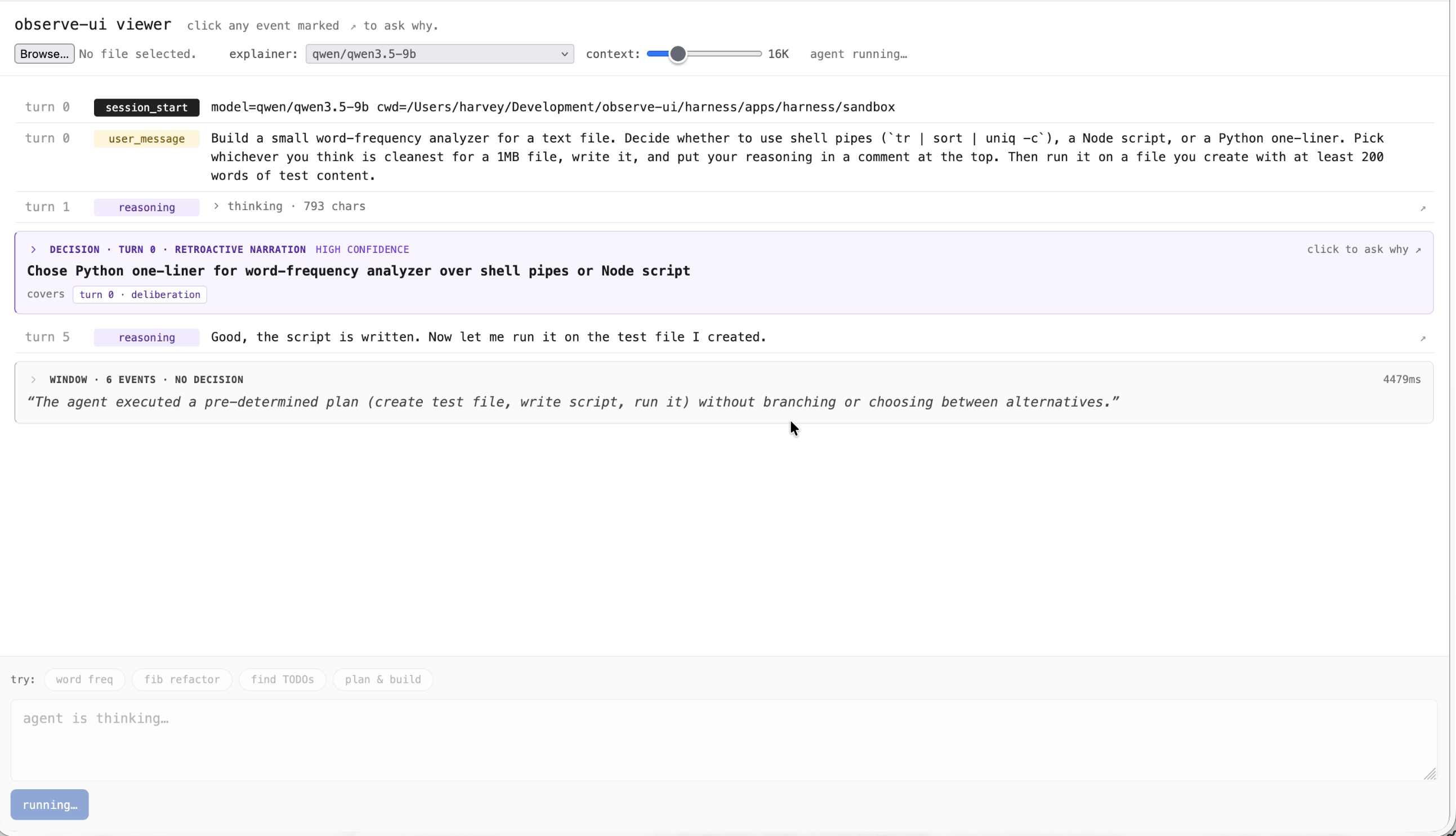

Twelve recommendation-system designs benchmarked head-to-head on the same dataset and ground-truth queries.

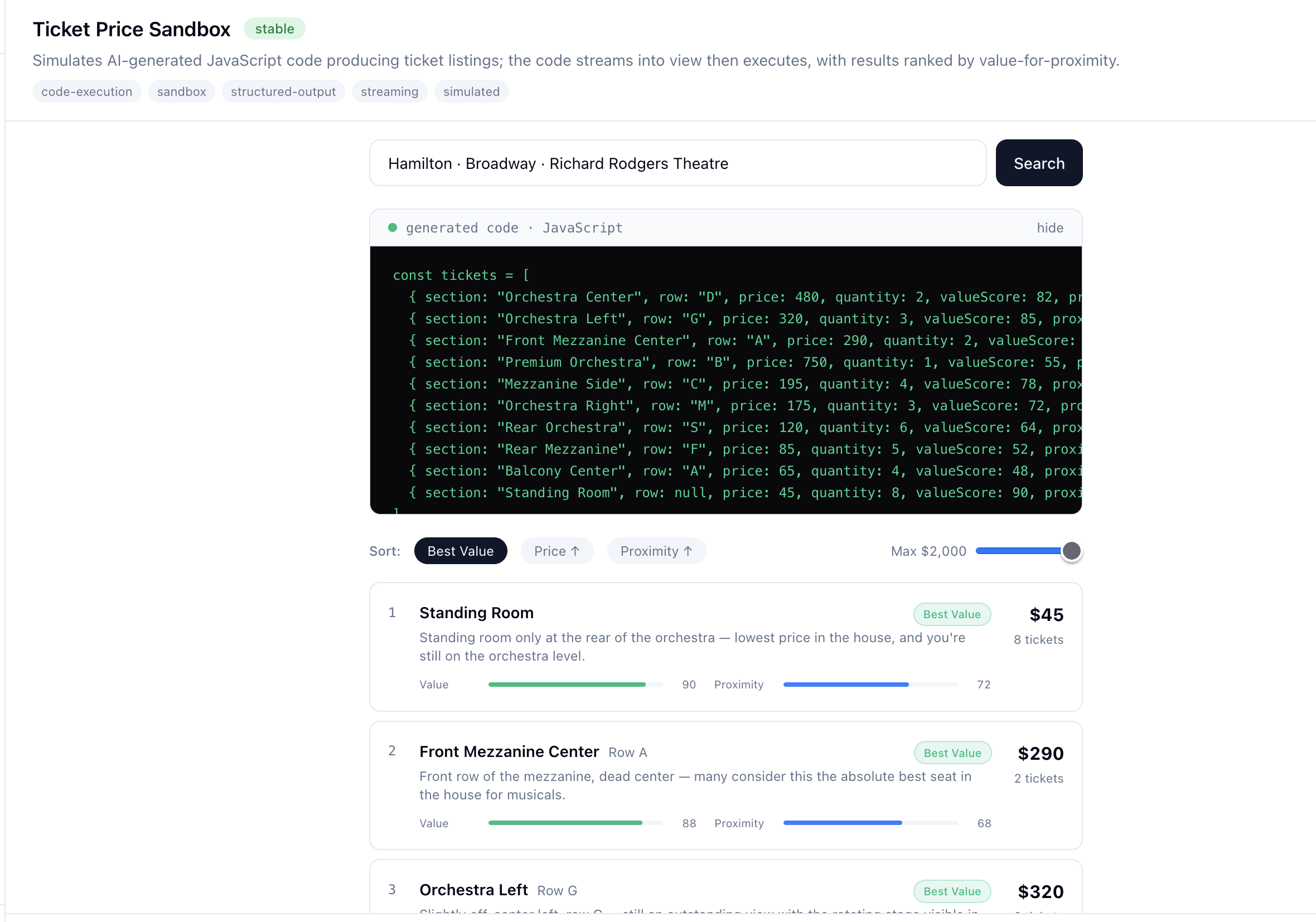

A living catalog of AI UX patterns — agent orchestration, tool-call visualization, adaptive cards and UI.

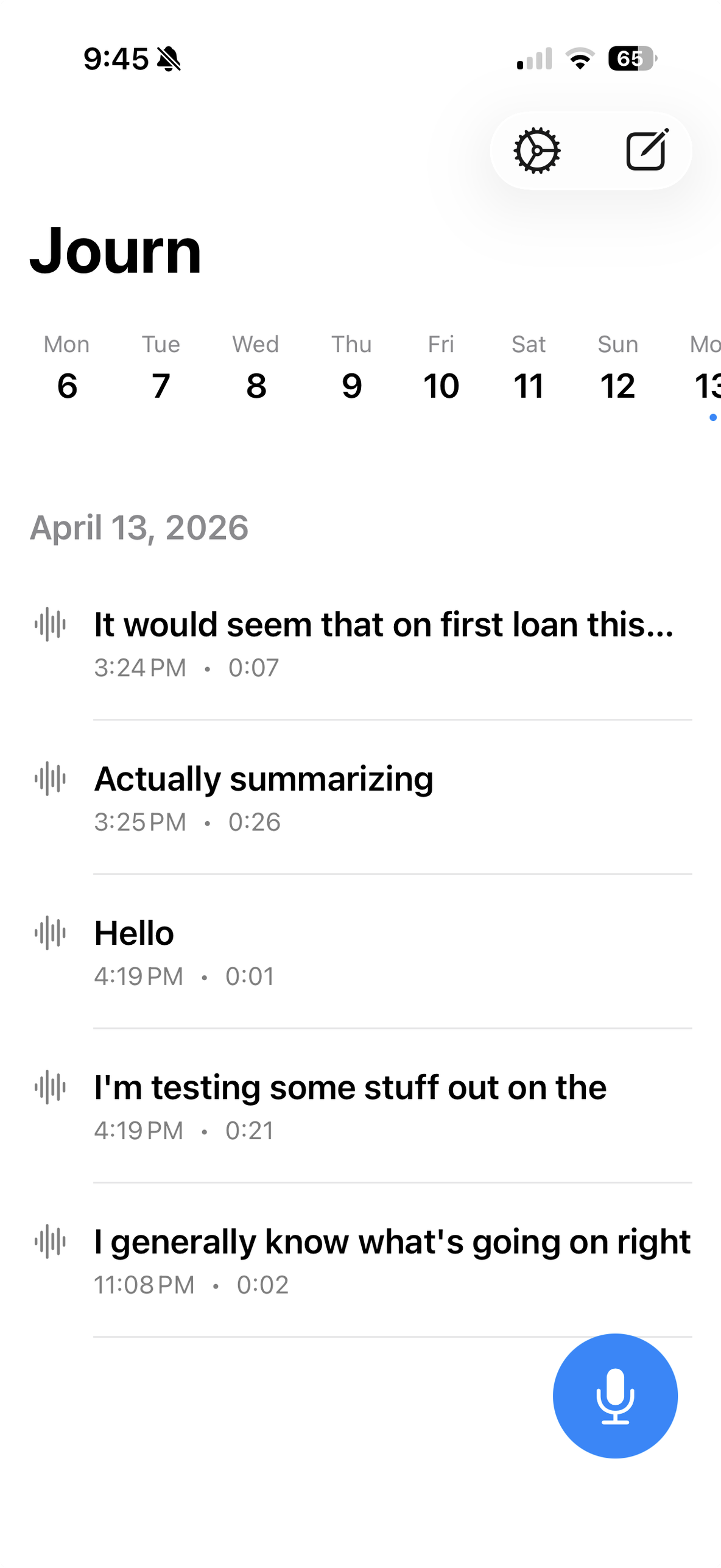

All-local, encrypted, voice-first journaling for iOS and macOS from a single SwiftUI codebase.

Self-guided iOS app for exposure therapy, built around experiments rather than sessions.

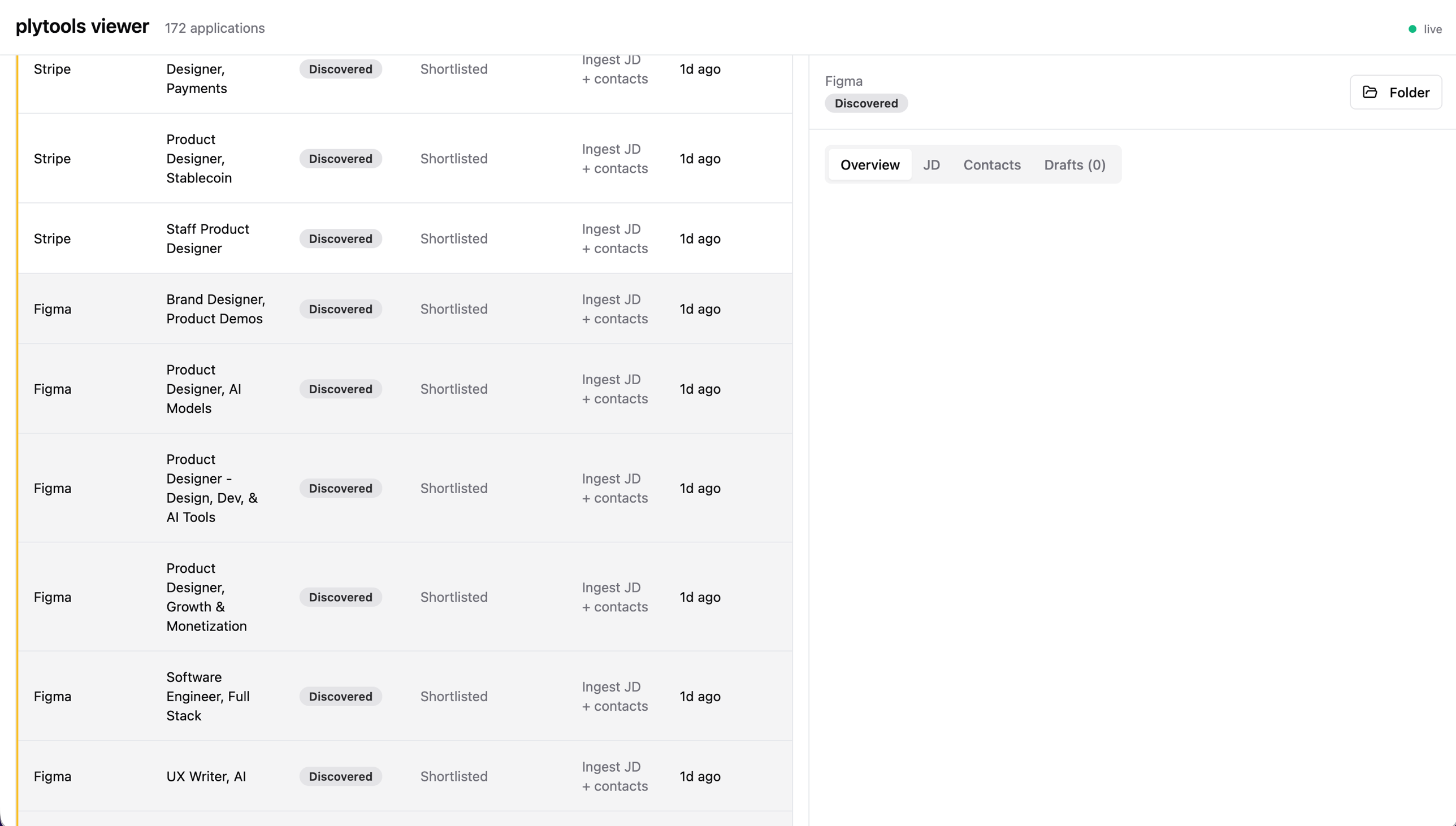

CLI for using Claude Code to brainstorm and track job applications.

Browser-based running utilities — pace converter, heart rate zones, race predictor. Everything runs client-side.